Now, the obvious solution is to just create a new queue with no user group specified and give it the remaining amount of memory so that it all adds up to 100%. The final queue may not contain User Groups or Query Groups. The following problems must be corrected before you can save this workload configuration: In multi-node clusters, failed nodes are automatically replaced.I want to update the WLM configuration for my Redshift cluster, but I am unable to make changes and save them due to the following message displayed: To recover a single-node cluster, restore a snapshot. When this happens, the cluster is in "hardware-failure" status. Issues on the cluster itself, such as hardware issues, might cause the query to freeze. For more information, see Connecting from outside of Amazon EC2 -firewall timeout issue. Check for conflicts with networking components, such as inbound on-premises firewall settings, outbound security group rules, or outbound network access control list (network ACL) rules. If an Amazon Redshift server has a problem communicating with your client, then the server might get stuck in the "return to client" state. For more information about query hopping, see WLM query queue hopping. To prevent queries from hopping to another queue, configure the WLM queue or WLM query monitoring rules. After the query runs: query STL_WLM_QUERY.While the query is running: query STV_WLM_QUERY_STATE.To confirm whether the query hopped to the next queue: If a read query reaches the timeout limit for its current WLM queue, or if there's a query monitoring rule that specifies a hop action, then the query is pushed to the next WLM queue.

For more information about checking for locks, see How do I detect and release locks in Amazon Redshift? A query hopped to another queue If the query is visible in STV_RECENTS, but not in STV_WLM_QUERY_STATE, the query might be waiting on a lock and hasn't entered the queue.

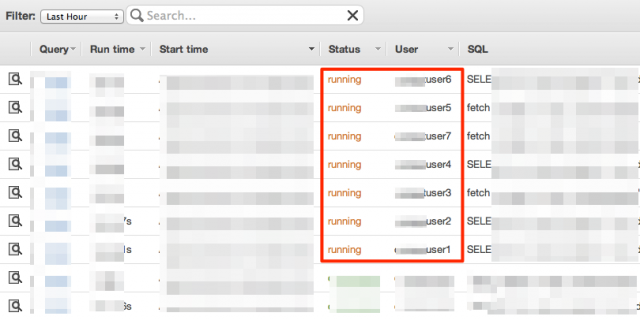

Query STV_WLM_QUERY_STATE to see queuing time: select * from STV_WLM_QUERY_STATE where query = your_query_id The query is waiting on a lock The query spends time queuing prior to running You can find additional information in STL_UNDONE. You can view rollbacks by querying STV_EXEC_STATE. If a data manipulation language (DML) operation encounters an error and rolls back, the operation doesn't appear to be stopped because it is already in the process of rolling back. The return to the client from the leader node.The return to the leader node from the compute nodes.Check STV_EXEC_STATE to see if the query has entered one of these return phases: Here are some common reasons why a query might appear to run longer than the WLM timeout period: The query is in the "return" phase Use the STV_EXEC_STATE table for the current state of any queries that are actively running on compute nodes: select * from STV_EXEC_STATE where query = your_query_id ORDER BY segment, step, slice Use this query for more information about query stages: select * from SVL_QUERY_REPORT where query = your_query_id ORDER BY segment, step, slice To view the status of a running query, query STV_INFLIGHT instead of STV_RECENTS: select * from STV_INFLIGHT where query = your_query_id For more information about query planning, see Query planning and execution workflow. However, the query doesn't use compute node resources until it enters STV_INFLIGHT status. When the query is in the Running state in STV_RECENTS, it is live in the system. When querying STV_RECENTS, starttime is the time the query entered the cluster, not the time that the query begins to run. For example, the query might wait to be parsed or rewritten, wait on a lock, wait for a spot in the WLM queue, hit the return stage, or hop to another queue. If WLM doesn’t terminate a query when expected, it’s usually because the query spent time in stages other than the execution stage. 
A WLM timeout applies to queries only during the query running phase.
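The system-table checks described in this post can be strung together into a small triage helper. The following is a minimal Python sketch, not an official tool: the function names `diagnostic_queries` and `likely_stage` are hypothetical, the SQL text is copied from the statements quoted in this post, and the mapping from "which tables show the query" to "which stage it is probably in" is a simplifying assumption based on the descriptions here. It does not connect to a cluster; you would run the generated SQL yourself and feed in what you observed.

```python
from typing import Dict

# SQL text as quoted in this post; only the query id is substituted.
DIAGNOSTIC_SQL = {
    "STV_INFLIGHT": "select * from STV_INFLIGHT where query = {qid}",
    "SVL_QUERY_REPORT": "select * from SVL_QUERY_REPORT where query = {qid} "
                        "ORDER BY segment, step, slice",
    "STV_EXEC_STATE": "select * from STV_EXEC_STATE where query = {qid} "
                      "ORDER BY segment, step, slice",
    "STV_WLM_QUERY_STATE": "select * from STV_WLM_QUERY_STATE where query = {qid}",
}


def diagnostic_queries(query_id: int) -> Dict[str, str]:
    """Return the diagnostic statements for one query id (hypothetical helper)."""
    qid = int(query_id)  # int() raises ValueError on non-numeric text
    return {table: sql.format(qid=qid) for table, sql in DIAGNOSTIC_SQL.items()}


def likely_stage(in_recents: bool, in_wlm_state: bool, in_inflight: bool) -> str:
    """Guess the stage of a query from where it is visible.

    Assumption: these three flags are enough for a first guess. A real
    investigation would also look at STV_EXEC_STATE and STL_UNDONE.
    """
    if in_inflight:
        # Per the post, compute node resources are used once the query is in STV_INFLIGHT.
        return "running on compute nodes (check STV_EXEC_STATE for a return phase)"
    if in_wlm_state:
        return "tracked by WLM but not yet in flight (check queuing time)"
    if in_recents:
        # Visible in STV_RECENTS but not in STV_WLM_QUERY_STATE.
        return "possibly waiting on a lock (has not entered a WLM queue)"
    return "not visible in STV_RECENTS"


if __name__ == "__main__":
    for table, sql in diagnostic_queries(12345).items():
        print(f"{table}: {sql}")
    print(likely_stage(in_recents=True, in_wlm_state=False, in_inflight=False))
```

The helper keeps the decision logic separate from any database access, so the same function can be reused whether the flags come from a manual check in a SQL client or from a script.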
